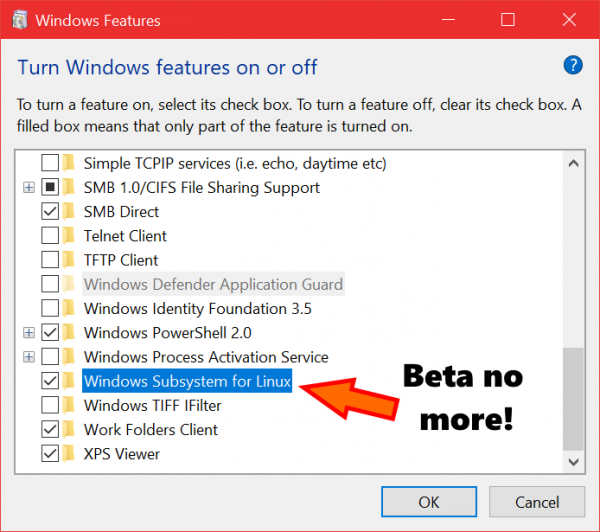

When the Windows 10 Fall Creators Update ships later this fall, Windows Subsystem for Linux (WSL) will leave beta. Microsoft announced that WSL will be a fully supported Windows feature in the upcoming update.

“This will be great news for those who’ve held-back from employing WSL as a mainline toolset: You’ll now be able to leverage WSL as a day-to-day developer toolset, and become ever more productive when building, testing, deploying, and managing your apps and systems on Windows 10,” the WSL team wrote in a post.

According to the team, not much should change other than the beta label. The team will continue to respond to bugs and issues posted to the GitHub repo, as well as monitor and contribute to discussions. Going forward, the company plans to improve the product quality by learning what works and what doesn’t work for developers.

Upcoming shortcut features in Android O

There are a number of new shortcuts and widgets developers will be able to leverage with the upcoming release of Android O. Shortcuts can provide users with a quick start to a specific task, and widgets can give them instant access to actions and information. According to Google, this helps increase user engagement for developers.

The new capabilities include the ability to add shortcuts and widgets from within an app, improvements to the user interface and experience, and a new option to add a specialized activity shortcut.

More information is available here.

Mozilla takes on speech-to-text

Mozilla has launched a new effort to make it easier for developers to create speech-to-text (STT) applications and to build an open audio file ecosystem. The Common Voice Project aims to tackle the fractured speech recognition landscape by making voice recognition available to everyone and by supporting the W3C’s Web Speech API.

“Powerful tools like artificial intelligence and machine learning, combined with today’s more advanced speech algorithms, have changed our traditional approach to development. Programmers no longer need to build phoneme dictionaries or hand-design processing pipelines or custom components. Instead, speech engines can use deep learning techniques to handle varied speech patterns, accents and background noise – and deliver better-than-ever accuracy,” the company wrote in a post.