I’ve long been a fan of the “You get what you measure” school of metrics doubters, especially in agile projects. It’s really easy to decide to measure something, have the people being measured act in a totally predictable and rational way, and screw up a team beyond belief. For example, if I measure how productive individual programmers are, then it’s to the advantage of individuals to focus on their own work and spend less time helping others. That completely kills teamwork.

On the other hand, I can definitely see times when metrics are absolutely critical. As a custom development organization, we need to be able to estimate cost and completion dates for prospective clients in order to win the work. And once we’ve started working on projects, we need to know, for our own sanity, how closely we’re following those estimates.

The challenge is to come up with the right set of metrics that satisfies the very reasonable management request to know where we are in our project, to do so in a way that is easy and unobtrusive to the team, and to avoid counterproductive, metrics-influenced behavior by the team.

What follows are the basic metrics I’ve been using to meet that challenge. These metrics strike a balance between meaningfully describing what’s happening inside a team and creating an environment that encourages the behaviors that help the team reach its goals, rather than incenting poor behavior.

As a final point before looking at the metrics: All metrics can be misused, so it is important to establish the right culture around measurement and around how managers throughout an organization use those measurements. The first time a metric is used to judge, punish or reward is the last time that metric will be useful. Metrics are signals or warning signs about where attention should be focused. They do not have meaning in and of themselves; they are mere guideposts for where we should spend our time learning.

How fast are we going?

The gold standard of progress on an agile team is its velocity. This can be measured in story points per week for teams following a more “traditional” agile process like Scrum or XP, or tracked in cycle time for those teams that have evolved to a lean or Kanban approach. The team I’m coaching now is somewhere in the middle, closer to “Scrumban.”
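For teams tracking cycle time instead of points, the measurement itself is simple: the elapsed time from when work on an item starts to when it is finished. The sketch below assumes each work item is recorded as a hypothetical `(id, started, finished)` tuple of calendar dates and counts calendar days; the records are illustrative, not data from the project described here.

```python
from datetime import date
from statistics import mean

# Hypothetical work-item records: (id, date work started, date work finished).
# Illustrative values only; a real team would pull these from its tracker.
items = [
    ("A", date(2024, 3, 4), date(2024, 3, 7)),
    ("B", date(2024, 3, 5), date(2024, 3, 12)),
    ("C", date(2024, 3, 6), date(2024, 3, 8)),
]

def cycle_times(records):
    """Return the cycle time, in calendar days, of each finished item."""
    return [(finished - started).days for _, started, finished in records]

def average_cycle_time(records):
    """Mean cycle time in days across all finished items."""
    return mean(cycle_times(records))

print(cycle_times(items))         # [3, 7, 2]
print(average_cycle_time(items))  # 4
```

Counting calendar days is an assumption; some teams count working days instead, which would change the numbers but not the idea.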

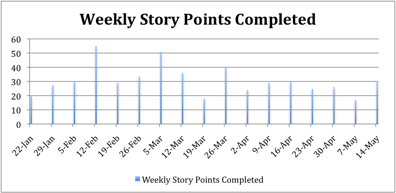

Our standard metric is points per week. Our measurements are reasonably accurate, varying between 25 and 30 points a week. We have the occasional week when we’re significantly below this and some weeks where we way overachieve. Our historical velocity chart looks like this:

At first, our velocity varied a lot, almost entirely because the project came to us with several million lines of code, most of it below what we would consider reasonable quality. In a legacy codebase full of code smells, it is really hard to estimate anything accurately because of the surprises hiding behind every method call. As we worked our way through the code, refactoring and improving things as we went, the code became more malleable and our velocity became more predictable.
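One way to put a number on “more predictable” is the coefficient of variation (standard deviation divided by the mean) over a trailing window of weeks: the lower it is, the steadier the velocity. This is a minimal sketch of that idea, not the chart the team actually used; the four-week window and the weekly point values are hypothetical, chosen only to mimic an erratic start followed by weeks in the 25 to 30 point range.

```python
from statistics import mean, pstdev

def coefficient_of_variation(points):
    """Population standard deviation divided by the mean; lower means steadier."""
    avg = mean(points)
    return pstdev(points) / avg if avg else float("inf")

def rolling_cv(weekly_points, window=4):
    """Coefficient of variation for each trailing window of `window` weeks."""
    return [
        coefficient_of_variation(weekly_points[i - window:i])
        for i in range(window, len(weekly_points) + 1)
    ]

# Illustrative numbers only: an erratic start, then weeks between 25 and 30.
weekly_points = [12, 40, 18, 35, 26, 29, 27, 28, 25, 30]
window = 4
for end_week, cv in enumerate(rolling_cv(weekly_points, window), start=window):
    print(f"weeks {end_week - window + 1}-{end_week}: CV = {cv:.2f}")
```

A falling coefficient of variation says only that estimates are becoming more reliable; it says nothing about whether the team is going faster.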

What about quality?

Measuring velocity alone is not enough, because teams can find ways to go faster when pressed to do so. They can cut corners on their “Done” list, which makes stories seem to take less time, or they can inflate their estimates so that each point represents less work. Both are perfectly reasonable strategies for teams that are pressured to “go faster!”

There is obviously another side to that “go faster” exhortation. When a team cuts corners, we should see quality drop very quickly, followed eventually by velocity. Quality drops because the team puts less care into writing tests for what it is building and spends less time on anything that takes time away from writing more code, such as refactoring or code reviews. This leads to more rework and puts us back in the unpredictable, low-quality state where this project started. Clearly this is not a good outcome!

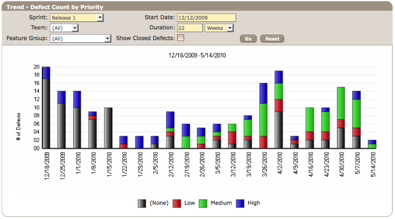

What I’ve found is that the best way to detect when teams are going faster by cutting corners is to keep an eye on quality too. So, in addition to tracking velocity, I also track defect counts. If the team has cut corners to go faster, that will show up as defects pretty quickly, creating a negative feedback loop. The drop in velocity comes a bit later, but not much, as the smells creep back into previously odorless code. This leads to the goal of maximizing velocity while minimizing defects, which is a much more reasonable target for a team.

This chart shows both the total number of open defects per week as well as the relative number of high-priority versus lesser-priority bugs in the system. Some of the variance in the counts was caused by legacy defects found in the existing code base, but there was another reason as well, which we’ll describe in a moment.
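The weekly numbers behind a chart like this can be derived from defect records that note when each defect was opened and closed. The sketch below assumes a hypothetical `Defect` record with a priority label and week numbers; real defect trackers expose different fields, and the sample defects are illustrative only. A defect closed during a week is treated as no longer open at the end of that week.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

@dataclass
class Defect:
    # Hypothetical record shape; real trackers expose different fields.
    priority: str                       # e.g. "high" or "low"
    opened_week: int                    # week number the defect was logged
    closed_week: Optional[int] = None   # None while the defect is still open

def open_by_priority(defects, week):
    """Count defects still open at the end of `week`, grouped by priority."""
    counts = Counter(
        d.priority
        for d in defects
        if d.opened_week <= week and (d.closed_week is None or d.closed_week > week)
    )
    return dict(counts)

# Illustrative defects only.
defects = [
    Defect("high", 1, 3),
    Defect("low", 1),
    Defect("low", 2),
    Defect("high", 3),
    Defect("low", 4, 4),
]
for week in range(1, 5):
    counts = open_by_priority(defects, week)
    print(week, sum(counts.values()), counts)
```

Keeping the priority split alongside the total makes it easier to tell whether a rising count is mostly minor issues or a growing pile of serious ones.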

Looking at the bigger picture

Each metric is useful on its own, but the best analysis comes from using the two together to understand what’s happening on the team and to raise questions about what may be causing ebbs and flows in velocity or quality. As shown in the bar graph, we did a good job of keeping the defect count pretty low during February and March, but the count climbed back up quickly to 20 or so active defects by the beginning of April.

It was toward the end of this relatively quiet period that the team began to feel pressured to deliver more functionality more quickly. They did what they thought was best and focused more on getting stories finished without spending quite as much time on making sure everything worked. They worked to what was being measured.

Since we had these metrics, I was able to see this happening fairly quickly. I pointed out what was happening with quality and, with the team’s help, related it back to the pressure they were feeling. Together we concluded that velocity and quality have to improve together, or teams will create false progress. By using the metrics to show the team what was happening, I helped them resolve this small issue on their own.
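Reading the two metrics together can be partly mechanized as a list of prompts for a conversation, in keeping with the idea that metrics start discussions rather than settle them. This is a minimal sketch under stated assumptions: velocity and open-defect counts are parallel weekly lists, the `defect_jump` threshold of 5 is an arbitrary hypothetical value, and the sample numbers are illustrative, not the team’s real data.

```python
def conversation_prompts(velocity, open_defects, defect_jump=5):
    """Return (week_index, note) pairs worth raising with the team.

    velocity and open_defects are parallel lists, one entry per week.
    defect_jump is a hypothetical threshold for "defects rose sharply".
    """
    if len(velocity) != len(open_defects):
        raise ValueError("velocity and open_defects must cover the same weeks")
    prompts = []
    for week in range(1, len(velocity)):
        dv = velocity[week] - velocity[week - 1]          # change in points
        dd = open_defects[week] - open_defects[week - 1]  # change in open defects
        if dv > 0 and dd >= defect_jump:
            prompts.append((week, "velocity up while defects jumped: are corners being cut?"))
        elif dv < 0 and dd > 0:
            prompts.append((week, "velocity and quality both slipping: what changed?"))
    return prompts

# Illustrative weekly numbers only (week indices start at 0).
velocity = [27, 28, 31, 33, 29]
open_defects = [6, 7, 12, 20, 22]
for week, note in conversation_prompts(velocity, open_defects):
    print(week, note)
```

The output is deliberately phrased as questions: a flagged week is a reason to talk with the team, not a verdict about it.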

The most important point about metrics

Metrics should never be used to judge a team or to form conclusions about what is happening. They should be treated as signposts along the way: indicators that surface things not easily observed in real time and that reveal trends as they are measured over time. They should always serve as the starting point for a conversation, that is, the beginning of understanding an issue.

If a manager or executive begins to use metrics as a way to judge a team, the metrics will be gamed from that point on. They will become useless because they will reflect the reality the manager wants to see rather than what is truly happening. In each of the cases described here, I used the metrics to confirm what I thought I was seeing, as the starting point of a conversation with the team about what they saw happening, and as a way to measure the impact of issues on the team. After that conversation, the metrics are also useful for judging whether the changes the team chose are effective. But they should never be used to judge people.

Metrics are no substitute for being on the ground with your team, feeling the vibe in the team room, pairing with developers and testers, or working with product owners. And let us not forget, projects are most successful when they focus on people over process.

Brian Button is the former VP of Engineering and Director of Agile Methods at Asynchrony, a company that specializes in agile software development. Brian instituted the Asynchrony Center of Excellence, leading a group of agile trainers and mentors that train, innovate, and evangelize agile to internal staff and project teams at outside corporations.