It is hard to talk about Big Data without also talking about data management, integration and warehousing. Many businesses now have Big Data projects in the pipeline, and handling that data is a major headache. There are several things you can do to make handling Big Data easier, including developing a data warehouse.

Of the Big Data projects in the works, however, only a handful actually incorporate IT management best practices to get the maximum benefit from their data. Businesses are focused on Big Data nowadays because many are waking up to what an asset data is. When data is managed well and IT efforts are executed correctly, the results can be impressive.

Here are some best practices for Big Data management that will benefit your business greatly.

1. Set up a data warehouse

Handling Big Data will require massive storage space, and what better space than a data warehouse? A data warehouse is simply a central point where all the business data from disparate sources is stored and integrated. Setting up a data warehouse will make data management so much easier. You will be able to access data quite effortlessly. On top of this, it will make data analysis a walk in the park.

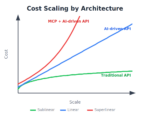

The main drawback of data warehousing is the cost involved. Setting up a data warehouse is much like building a real warehouse, only in the cloud. The costs are high, so you need to get it right from the beginning, because fixing mistakes later can be prohibitively expensive.
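To make the idea of a central point for data from disparate sources more concrete, here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for a real warehouse. The table names, the sample records and the helper functions `build_warehouse` and `revenue_by_customer` are all hypothetical and exist only for illustration. A production warehouse would use a dedicated platform and proper loading pipelines.

```python
import sqlite3

# Hypothetical records from two separate source systems (illustrative data only).
crm_customers = [(1, "Acme Ltd"), (2, "Globex")]
web_orders = [(101, 1, 250.0), (102, 2, 75.5), (103, 1, 40.0)]


def build_warehouse(customers, orders):
    """Load both sources into one in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE orders ("
        "id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?)", customers)
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", orders)
    conn.commit()
    return conn


def revenue_by_customer(conn):
    """Query across both sources now that they live in the same place."""
    rows = conn.execute(
        "SELECT c.name, SUM(o.amount) FROM customers c "
        "JOIN orders o ON o.customer_id = c.id "
        "GROUP BY c.name ORDER BY c.name"
    )
    return rows.fetchall()


warehouse = build_warehouse(crm_customers, web_orders)
print(revenue_by_customer(warehouse))
```

Once both sources sit in one store, a single query can answer a question that would otherwise require stitching two systems together by hand.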

2. Agile and prototypical approaches needed

Big Data projects are iterative by nature and therefore require prototypical approaches. Sandbox environments that let data analysts query Big Data quickly and then publish the results are of great importance to the Big Data value process. The data that these queries operate upon is not neatly structured into fixed-record-length systems, so you cannot know in advance what the final result will be. An iterative process is therefore needed to work in such an unpredictable data environment.

3. Public clouds also work

Most large businesses shy away from public clouds, primarily because of security and governance issues. In most cases, however, public clouds provide an ideal environment for speedy Big Data analytics prototyping, as long as you take your prototypes off the public cloud the moment you are done running them. Public clouds are also an economical place to store and archive your raw Big Data. Any use of public clouds should, however, be clearly spelled out in your IT policy.

4. Get an administrator

You will always need a database administrator to keep a close eye on your data. These are professionals who handle all sorts of issues related to databases and their processes. There is technology available to make this work easier, but you will still need experts to keep your data safe and healthy. You have probably heard of data getting distorted over time. This is usually the result of poor data-management practices. With a database administrator in place, it is far less likely to become a problem.

5. Trim your Big Data

Companies have a habit of keeping all their incoming Big Data in its raw form, even though most of it may never be used. They are justified to the extent that some future query might need data that is not being used today. Keeping all the data in raw form is therefore a way of staying safe.

Nevertheless, it is healthy to reduce the amount of Big Data that you are accumulating. Some of this data (such as the overhead data from website interactions) will probably never be used. The same goes for machine handoffs and network jitter. You should develop criteria and methods for removing the data that you consider strategically unnecessary in both the long and the short term. This will significantly lower the cost of storing it all.
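As a rough illustration of what such criteria might look like in practice, the sketch below drops events whose type is on an explicit drop list before they reach storage. The event types, the record layout and the `trim_events` helper are assumptions made for this example; your own criteria would come from your business use cases.

```python
# Hypothetical event types treated as strategically unnecessary.
DROP_EVENT_TYPES = {"heartbeat", "machine_handoff", "network_jitter"}


def trim_events(events, drop_types=DROP_EVENT_TYPES):
    """Return only the events whose type is not on the drop list."""
    return [event for event in events if event.get("type") not in drop_types]


# Illustrative raw input; real data would arrive from logs or a stream.
raw_events = [
    {"type": "purchase", "value": 30},
    {"type": "heartbeat"},
    {"type": "network_jitter", "ms": 12},
    {"type": "page_view", "path": "/pricing"},
]

kept = trim_events(raw_events)
print(len(raw_events), "->", len(kept))
```

Keeping the drop list explicit, rather than burying it in ad hoc scripts, makes it easier to review and adjust when a future query turns out to need something you had been discarding.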

6. Set some governance standards

Based on your Big Data business use cases, you can establish governance standards. Who should have access to the data, and how much access should each of these individuals have? Aside from authorization, the privacy of the data is also an issue. Is the data acceptable to keep on public clouds? You should define governance guidelines, and doing so should be among the first things you do in a Big Data project.
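The question of who gets access and how much can be written down as an explicit policy. The sketch below is one simple way to express that, assuming made-up roles, dataset names and access levels. It does not represent any particular governance product, and `POLICY` and `is_allowed` are hypothetical names.

```python
from enum import IntEnum


class Access(IntEnum):
    """Access levels, ordered so that a higher level includes the lower ones."""
    NONE = 0
    READ = 1
    WRITE = 2


# Hypothetical policy: (role, dataset) -> highest access level granted.
POLICY = {
    ("analyst", "clickstream"): Access.READ,
    ("data_engineer", "clickstream"): Access.WRITE,
    ("analyst", "customer_pii"): Access.NONE,
}


def is_allowed(role, dataset, needed):
    """Deny by default: pairs missing from the policy get no access."""
    granted = POLICY.get((role, dataset), Access.NONE)
    return granted >= needed


print(is_allowed("analyst", "clickstream", Access.READ))
print(is_allowed("analyst", "clickstream", Access.WRITE))
```

Denying by default means that a new role or dataset has no access until someone makes a deliberate decision about it, which fits the advice to settle governance early.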

7. Prepare for disruptions

It is common knowledge that even the best technologies can fail sometimes. Business and Big Data disruptions should be accepted as a fact of life and prepared for. You can do this by adopting strategies and architectures that are flexible enough to accommodate new developments, so that you can sort out disruptions as soon as they occur. The worst outcome is having your business close down for several hours because the system is not working or something went amiss. Losses do not tickle any business owner’s fancy.