An informal survey of DevOps teams found that the vast majority of enterprises have bandwidth only for some form of manual testing and lack the skilled resources required for full test automation. Most companies do not even conduct a formal user acceptance testing (UAT) cycle; instead, business users carry out informal manual testing. This article presents challenges and solutions for UAT, but they apply just as well to companies that rely on informal manual testing.

The manual testing cycle has several pain points:

- Users must be trained on new functionality before they can test it

- Limited availability of business users

- Defect reporting

- No test automation created

Let’s dig into each of these, then look at how a new type of testing tool and generative AI (Gen AI) can address them.

Training – Users may understand their current processes, but they must be trained on how the new process will work before they can test it. The business analysts who design the updates often lack the resources to write the documentation and fully educate the testers before testing begins.

Limited Availability – The business doesn’t have time for every user to test every new feature in a release, so individual testers must be assigned to specific features and told clearly what to test. A proper UAT requires a test plan for each manual tester that ensures every new feature is covered by users who will actually use that feature in their work.
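As a rough illustration of that coverage requirement, the following Python sketch assigns each new feature to the least-loaded tester who actually uses it and reports any features left uncovered. The `build_test_plan` function, the `max_per_tester` cap, and the feature and tester names are all hypothetical, and the greedy assignment is just one simple approach, not a prescribed method.

```python
from collections import defaultdict


def build_test_plan(new_features, users_by_feature, max_per_tester=2):
    """Assign each new feature to a tester who uses it; return (plan, uncovered)."""
    load = defaultdict(int)   # number of features assigned to each tester so far
    plan = defaultdict(list)  # tester -> features that tester should test
    uncovered = []            # features with no available tester who uses them
    for feature in new_features:
        # Only users who actually use the feature and still have capacity qualify.
        candidates = [
            u for u in users_by_feature.get(feature, []) if load[u] < max_per_tester
        ]
        if not candidates:
            uncovered.append(feature)
            continue
        # Pick the least-loaded candidate; break ties alphabetically.
        tester = min(candidates, key=lambda u: (load[u], u))
        plan[tester].append(feature)
        load[tester] += 1
    return dict(plan), uncovered


# Usage with made-up features and testers.
features = ["bulk upload", "invoice approval", "tax report"]
users = {
    "bulk upload": ["ana"],
    "invoice approval": ["ana", "raj"],
    "tax report": [],
}
plan, gaps = build_test_plan(features, users)
print(plan)  # {'ana': ['bulk upload'], 'raj': ['invoice approval']}
print(gaps)  # ['tax report']
```

A greedy pass like this can miss assignments that a more thorough search would find, but the list of uncovered features is the useful part: it shows where the plan still needs a tester before UAT starts.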

Reporting Defects – To reproduce a problem, and even to determine whether it is a true bug, developers often need to know the exact steps taken and the values entered at each step. Users often forget the steps they took and the values they entered, especially when a process spans multiple screens and steps. Developers also expect bugs to be reported in the bug tracking applications they use, which business users generally don’t know. So the team conducting the UAT often creates a shared spreadsheet where users log defects, or sets up an email alias or Slack channel for reporting issues. This is problematic as well, because it is easy for users to leave out information that developers need to reproduce and fix the issues.

No Automated Tests – When UAT is complete, the product has been tested, but no automation scripts have been produced. So the next round of testing will also be manual.

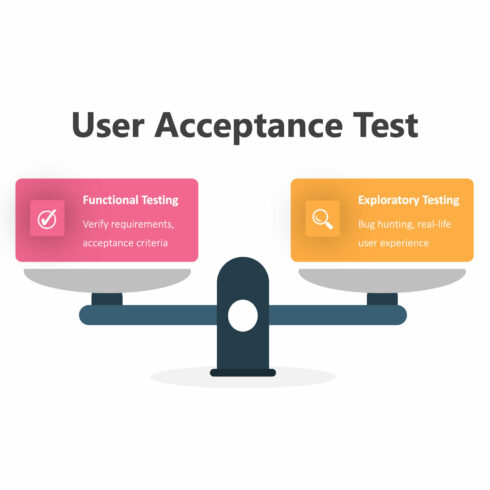

Exploratory Testing

What if you could do manual testing just as you do now, address the issues above, and, with little to no extra effort, create automated tests that could be added to your regression suites?

Exploratory testing is a methodology based on exploring all aspects of a new feature. Most development teams are concerned only with testing the “Happy Path”; that is, confirming that the feature does what it is supposed to do. If you can demonstrate that it works for a simple case, then it’s done, right?

Subject matter experts (SMEs) know how to explore all of the different pathways a user might take to accomplish a task. They try boundary and unexpected input values, such as a date in the past where a future date is expected, doing anything they can to break the code. Unfortunately, SMEs typically can’t write scripts, even if they had the time.

Many modern testing tools have a recording capability that captures a click stream. Recording is used as an alternative to scripting, but it is generally employed when a person is deliberately authoring a test. The test author has a step-by-step process in mind, so they record the clicks and values instead of writing Selenium code. This works well if you know what you are trying to create. Unfortunately, the people using these tools are not SMEs.

In the music business, many recording groups tape their jam sessions. Often a musician plays a guitar lick and the other band members say, “Man, that was great! Play it again.” Unfortunately, the musician cannot remember what they just played. But the recording engineer plays back the tape, and there it is. Many of the most famous guitar riffs in classic rock were happy accidents that, thankfully, were recorded during rehearsal and included in the final versions of songs.

A new breed of exploratory testing tools works the same way. They are optimized to capture an exploratory test session, like the tape recorder in a studio. When a defect is found, the tester can “rewind” the tape to the beginning of the sequence and highlight the steps that led to the defect. The exploratory testing tool turns the highlighted steps into a package of screenshots and a video that can be posted to the bug tracking software through an integration. The tester doesn’t even need to know how to use the bug tracking tool or have an account in it. They can also annotate the screenshots by drawing on them with the mouse and add notes that make clear what was expected.
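To make the “tape recorder” idea concrete, here is a minimal Python sketch, assuming a session is stored as an ordered list of steps (action, target element, entered value, screenshot path). Highlighting is simply slicing the recording, and the defect report is a plain dictionary. The `Step`, `SessionRecorder`, and `build_defect_report` names and the report format are hypothetical; real exploratory testing tools and bug trackers define their own formats and integrations.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    action: str           # e.g. "click" or "type"
    target: str           # label or locator of the UI element
    value: str = ""       # value entered, if any
    screenshot: str = ""  # path of the screenshot captured after the step


@dataclass
class SessionRecorder:
    steps: list = field(default_factory=list)

    def record(self, step):
        # Every action is appended, like tape running during a jam session.
        self.steps.append(step)

    def highlight(self, start, end):
        # "Rewind" to step `start` and keep steps start..end (0-based, inclusive).
        return self.steps[start:end + 1]


def build_defect_report(title, expected, steps):
    """Package highlighted steps into a tracker-neutral payload (hypothetical format)."""
    numbered = [
        f"{i}. {s.action} {s.target}" + (f" = {s.value!r}" if s.value else "")
        for i, s in enumerate(steps, start=1)
    ]
    return {
        "title": title,
        "steps_to_reproduce": "\n".join(numbered),
        "expected": expected,
        "attachments": [s.screenshot for s in steps if s.screenshot],
    }


# Usage: the tester highlights the two steps that led to the defect.
rec = SessionRecorder()
rec.record(Step("click", "Login", screenshot="s0.png"))
rec.record(Step("type", "Start date", "2020-01-01", "s1.png"))
rec.record(Step("click", "Save", screenshot="s2.png"))
report = build_defect_report(
    "Past start date accepted",
    "A validation error for dates in the past",
    rec.highlight(1, 2),
)
print(report["steps_to_reproduce"])
# 1. type Start date = '2020-01-01'
# 2. click Save
```

Because every value is captured as it is entered, the steps and values that users tend to forget end up in the report automatically. Sending the payload to a specific bug tracker is left out here, since that depends on the tracker's own integration.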

After the defect report has been logged, the exploratory testing tool can generate an automated test from the same steps. The developer can use this test to verify the fix, and the QA team can add it to the appropriate regression suites.

Gen AI

Generative AI can also help. AI tools can automatically generate documentation for a new feature. In fact, AI tools can generate a test script for the happy path of a feature and create a step-by-step video demonstration of it. These may not be production-quality materials, but they are 90% of the way there and generally much better than what business users get today.