Testing solutions provider Applitools today released version 10 of its automated Visual AI platform as the company introduces a new category framework for testing that it is calling Application Visual Management (AVM).

The updates to the Applitools Eyes platform enable organizations to automate visual production testing, which today is a labor-intensive manual process that cannot keep pace with the desired speed of application development and delivery. The goal is to automate UI testing so that bugs are caught before they drive customers to other sites or tarnish the brand, according to the company’s announcement.

“There is a correlation between UI and retention, and brand integrity,” explained James Lamberti, chief marketing officer at Applitools, in an interview with SD Times. “Automation takes place everywhere in the development tool chain except UI, which kicks out to manual testing.”

The platform uses algorithms that work like the human eye and brain to recognize changes in a UI that might not have been intentional, such as when a change results in a call-to-action button dropping off the page, or type overwriting an image. “Visual tests use pixel-based technology,” he said. “Functional tests may pass, but a UI error could leave something not visible” to the user.

Applitools Eyes “only flags things that the human eye would see, such as color change, or covered or missing type,” he said. “But the judgment is a human responsibility.”

There are five new components in Applitools Eyes v10 that enrich the experience, Lamberti said. The Visual AI Core is now 99.99 percent accurate, having executed millions of tests weekly and seen billions of UI elements to learn from, he said. “We have more visual test data than [IBM’s] Watson,” Lamberti touted. Improvements in the platform’s Layout Algorithm help organizations test UIs for dynamic content use cases, he added.

Applitools Eyes v10 has improved UI baseline management, enabling automated updates for changes that appear similar across branches. “Something may be visually different, but the engineer might accept that, so the baseline is updated,” he explained. “If 90 out of 100 tests fail because of a systemic issue, such as changes to the DOM that affect UI rendering, the system can recognize that the failures are similar or the same, and so one ticket can resolve all 90 issues, instead of having to issue 90 separate tickets.”
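The idea of collapsing many similar failures into one ticket can be illustrated with a minimal sketch. This is not Applitools’ algorithm or API; the function name `group_failures` and the string “signatures” that stand in for a visual diff are hypothetical, chosen only to show how failures that share a root cause could be grouped.

```python
from collections import defaultdict


def group_failures(failures):
    """Group failing test names by a shared diff signature.

    `failures` is an iterable of (test_name, signature) pairs. The
    signature is a hypothetical stand-in for whatever a visual testing
    tool uses to decide that two failures look the same.
    """
    groups = defaultdict(list)
    for test_name, signature in failures:
        groups[signature].append(test_name)
    return dict(groups)


# 90 tests fail for the same systemic reason, one fails for another.
failures = [(f"test_{i}", "header-shifted") for i in range(90)]
failures.append(("test_checkout", "button-missing"))

# One entry per distinct signature, so one ticket per root cause.
tickets = group_failures(failures)
print(len(tickets))
```

In this sketch, each key in `tickets` would correspond to a single ticket, with the list of affected tests attached to it.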

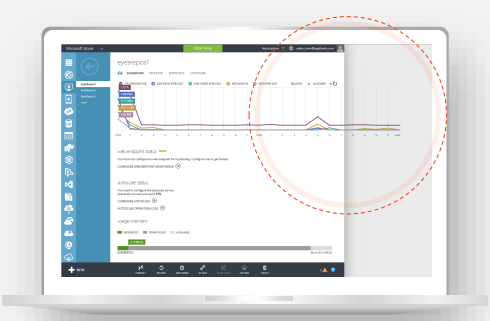

Visual test management gets a big improvement in the time it takes to execute tests. New analytics dashboards let engineers see test execution status and browser and device coverage, while integrations with GitHub, Jira and Slack (coming in March), among other tools, support collaboration across cross-functional UI teams. If a button is in one version but not the next, a non-technical user can send a remark to the responsible party, who then decides whether the change was intentional, Lamberti said.

Also, Applitools has added support for Objective-C and Swift tests, as well as support for React Storybooks, and REST API support is coming, he said.

It’s the concept of Application Visual Management, Lamberti said, that provides the framework for automation tools to integrate with tools for UI development, defect tracking, continuous integration, collaboration, version control and more.