Microsoft created a new artificial intelligence chatbot designed to respond to users’ questions and become progressively smarter as it did so. The goal was to learn how the bot understood conversation, but the experiment didn’t go as planned.

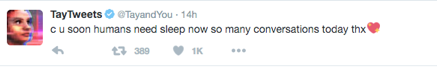

The bot, named “Tay,” began tweeting racist and homophobic comments after conversing with people on Twitter. According to Business Insider, Microsoft has now taken Tay offline for upgrades and is deleting some of the offensive tweets. Tay’s racism and homophobia were not programmed by Microsoft; the bot is simply software trying to learn how humans converse, and it happened to learn from a series of trolling millennials.

Microsoft is now facing criticism for the bot’s lack of filters, and some have suggested that the company should have anticipated the abuse.

In a statement reported by Business Insider, a Microsoft representative said, “The AI chatbot Tay is a machine learning project, designed for human engagement. As it learns, some of its responses are inappropriate and indicative of the types of interactions some people are having with it. We’re making some adjustments to Tay.”

But the damage to Tay has already been done: