The latest version of the open-source machine learning library PyTorch is now available. PyTorch 1.7 introduces new APIs, support for CUDA 11, profiling and performance updates for RPC and TorchScript, and stack traces in the profiler.

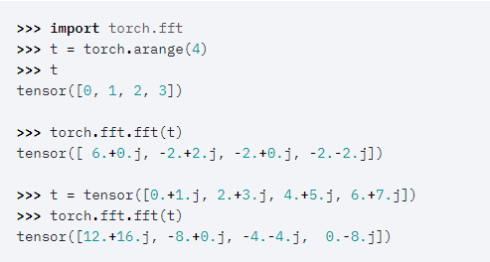

New front-end APIs include torch.fft, a module that implements FFT-related functions; support for the nn.Transformer module abstraction in the C++ frontend; and torch.set_deterministic, which directs operators to select deterministic algorithms when available. All of these new APIs are currently in beta.
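As a rough illustration of the torch.fft module, the sketch below transforms a small cosine signal with torch.fft.fft and compares the result against a direct DFT. The helper names (naive_dft, make_signal) and the signal itself are made up for this example, and the explicit `import torch.fft` is there on the assumption that the code may run on 1.7, where the module had to be imported by name.

```python
import math

import torch
import torch.fft  # explicit import; assumed necessary on PyTorch 1.7


def naive_dft(x):
    """Direct O(n^2) DFT of a 1-D real tensor (hypothetical helper, for comparison only)."""
    n = x.shape[-1]
    k = torch.arange(n, dtype=torch.float64)
    # angles[j, m] = -2*pi*j*m/n, so w[j, m] = exp(-2*pi*i*j*m/n)
    angles = -2.0 * math.pi * k.unsqueeze(1) * k.unsqueeze(0) / n
    w = torch.complex(torch.cos(angles), torch.sin(angles))
    return w @ x.to(torch.complex128)


def make_signal(n=8, cycles=2):
    """A cosine that completes `cycles` full periods over n samples."""
    t = torch.arange(n, dtype=torch.float64)
    return torch.cos(2.0 * math.pi * cycles * t / n)


if __name__ == "__main__":
    signal = make_signal()
    spectrum = torch.fft.fft(signal)
    # Energy should concentrate in bin 2 and its mirror, bin 6.
    print(spectrum.abs())
    print("max deviation from naive DFT:", (spectrum - naive_dft(signal)).abs().max().item())
```

The naive DFT is only a cross-check; in practice torch.fft.fft is the call of interest.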

Performance updates include the addition of stack traces to the profiler, which lets users see not only the operator name in the profiler output table, but also where in the code the operator is called.
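A minimal sketch of how stack traces can be requested from the autograd profiler follows. The function names (scaled_sum, profile_with_stacks) and the tensor size are illustrative assumptions; the exact columns in the printed table may differ between PyTorch versions.

```python
import torch
from torch.autograd import profiler


def scaled_sum(x):
    """Toy workload (hypothetical): a few elementwise ops followed by a reduction."""
    return (x * 2.0 + 1.0).sum()


def profile_with_stacks():
    """Profile the toy workload and return the summary table as a string."""
    x = torch.randn(64, 64)
    # with_stack=True asks the profiler to record source locations for each operator.
    with profiler.profile(with_stack=True) as prof:
        scaled_sum(x)
    # group_by_stack_n groups entries by the top N stack frames.
    return prof.key_averages(group_by_stack_n=5).table(
        sort_by="self_cpu_time_total", row_limit=5
    )


if __name__ == "__main__":
    print(profile_with_stacks())
```

Recording stacks adds overhead, so it is probably best enabled only while investigating a specific hotspot.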

In addition, TorchElastic is now a stable feature. It provides a strict superset of the torch.distributed.launch CLI, with added features for fault tolerance and elasticity.

Other distributed training and RPC features include beta support for uneven dataset inputs in DDP, improved async error and timeout handling in NCCL, the addition of TorchScript rpc_remote and rpc_sync, a distributed optimizer with TorchScript support, and more.

More information on PyTorch 1.7 is available in the official release notes.