Any discussion of risk-based software testing invariably leads to HealthCare.gov, the healthcare exchange website that’s the most prominent face of Obamacare. The site is almost certainly the most obvious software-related failure in recent memory. Its botched rollout last fall generated cringe-worthy stories that continued into 2014.

It doesn’t take any sort of software genius to declare HealthCare.gov a total flop, at least at launch. Talk to those who advocate risk-based testing, though, and you’re likely to hear the same two conclusions about the coding disaster that helped to knock President Obama’s approval rating down to the lowest point in his presidency. First, never think of the cost of a faulty subroutine just in terms of the technical headache it will create. Second, always insist on honest discussions about risk right up the management chain. And by the way, expect both bits of wisdom to be routinely subverted by fellow programmers, higher-ups and broader trends in technology, including a vastly accelerated schedule of code drops.

“There’s no one in the world today that’s developing product who has enough time or other resources to do enough quality assurance,” says Bryce Day, founder and CEO of Catch Software, based in New Zealand.

Focus first on what matters most

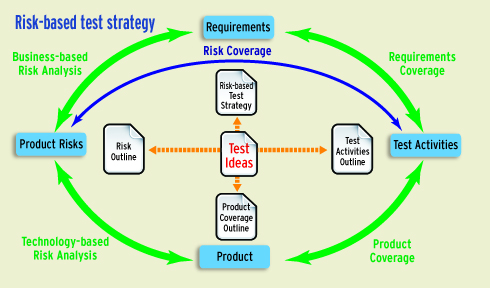

Given these constraints, it follows that testers need to make a prioritized list of potential bugs, based on risk. This indeed is the hallmark of risk-based testing. But how do you generate this list? The most common way is to do some sort of mathematical analysis where risk is calculated as the frequency of a given operation multiplied by the impact if that operation fails. (Day elaborated on this definition in a 2012 blog post, “Risk: A four-letter word for quality management?”)

Sounds easy enough, but Day said many software types make a mistake when they apply this sort of thinking just to their blocks of code. Risk-based testing is not about thinking of a path through a piece of code, he insisted, but rather about the path through your business.

To illustrate, Day used the example of software for an ATM. The frequency of users entering their PINs is high, probably nearly 100%. And given that ATMs are basically unusable if an entered PIN isn’t recognized, that means the risk associated with a faulty PIN process is quite high as well. So if you’re writing code, make sure the process of entering PINs is tested six ways to Sunday.

Day’s point is that if you focus on your overall business operation instead of just the workings of your application, it’s easier to arrive at reasonable guesses as to the impact of potential problems. ATMs that botch handling of PINs cost their owners lots of money. ATMs that get buggy when users try to switch to the Portuguese or Polish interface cost their owners less. Neither outcome is good. But obviously testing should focus on any problems with PINs first.
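
As a rough illustration of the frequency-times-impact calculation applied to the ATM example, here is a minimal Python sketch. The operation names, frequencies and dollar figures are hypothetical, invented only to show the mechanics; they do not come from Day or from any real ATM data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Operation:
    name: str
    frequency: float  # assumed fraction of sessions that use the operation (0.0 to 1.0)
    impact: float     # assumed business cost, in dollars, if the operation fails


def risk_score(op: Operation) -> float:
    """Risk as frequency multiplied by impact."""
    return op.frequency * op.impact


def prioritize(ops: list[Operation]) -> list[Operation]:
    """Return operations ordered from highest to lowest risk score."""
    return sorted(ops, key=risk_score, reverse=True)


# Hypothetical figures, for illustration only.
atm_operations = [
    Operation("switch interface language", frequency=0.02, impact=500.0),
    Operation("enter PIN", frequency=0.99, impact=10_000.0),
    Operation("print receipt", frequency=0.40, impact=200.0),
]

for op in prioritize(atm_operations):
    print(f"{op.name}: {risk_score(op):,.2f}")
```

With these made-up inputs, PIN entry lands at the top of the list and the language switch at the bottom, which matches the ordering the ATM example argues for. The impact column is meant to be a business cost rather than a count of affected code paths.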

Like many who preach the merits of risk-based testing, Day said doing some basic calculating of expected costs is a good starting point. However, what’s more essential is insisting on open, direct conversations about risk that go right up the management chain. It’s relatively easy for front-line developers to dial up or down their testing efforts based on the consensus about the appropriate level of risk. The hard part is reaching that consensus in the first place.

One reason agreement is difficult is that feelings about risk naturally differ depending on where you sit in your organization’s hierarchy. Most individual developers and their immediate supervisors are highly risk-averse. That makes sense: the closer you are to the technology, the better you understand how many ways things can go wrong. Move up the chain, though, and you will soon get to management types motivated, at least in the private sector, to shrink time to market and boost sales. And in the public sphere there are the related concerns of gaining advantage in public perception and endless political jockeying.

“In government… the currency by which we measure return on investment is politics,” wrote former NASA CIO Linda Cureton in a Jan. 6 Information Week commentary.

A lack of understanding

Another problem is that understanding of risk and complexity among business and software engineering types alike hasn’t necessarily kept up with advances in software generally. The increase of pattern-based languages and frameworks—think Ruby and Ruby on Rails as just one example—makes it easier for relative novices to quickly build Web apps. And the stories of those youthful self-taught programmers who hit it big get set in tech lore like insects in amber.

Self-taught college dropout Jack Dorsey, who claimed programming to be “an art form” and famously took drawing and fashion-design classes even while serving as Twitter CEO, became a billionaire with Twitter’s 2013 IPO. It’s reasonable to ask: If this would-be dressmaker can hit it this big, how hard can anything to do with programming—including testing—be?

Programmers advocating a rigorous approach are under siege from within their own ranks, too. Day pointed his finger at the most zealous advocates of agile programming as the biggest culprits. Like all zealots of any persuasion, those advocating iterative and incremental development see their way as the answer to nearly any question. He said the agile attitude seems to be “Don’t worry about bugs in the release; we can fix any that sneak through in two weeks, tops.”

Unfortunately, two weeks may be too much time, and not just for high-profile projects like HealthCare.gov or Windows 8.1, which suffered its own rocky rollout last fall. A series of blog posts on Appurify, a Google Ventures-backed startup in San Francisco working on mobile test automation, described how buggy code can sink user ratings, and thus discoverability and rankings, on the Apple App Store. This is relevant even for popular, well-established apps since, as Appurify’s chief data scientist Krishna Ramamurthi wrote in one post, “The ratings for ‘Current Version’ are featured more prominently on search engine results, and arguably matter more to the average consumer than ratings for ‘All Versions.’ ”

Nischal Varun is director of testing services at IT services firm Mindtree, which earns about a third of its US$430 million in annual revenue from testing. He agreed with most of what Day said about software testing trends, though he said there’s another factor worth considering: increased government regulation, particularly in healthcare.

In September, the FDA issued new guidelines for its oversight of medical apps. Apps subject to regulation—those intended to be used as an accessory to a regulated medical device, or to transform a mobile platform into a regulated medical device—now have to navigate the FDA’s own tailored risk-based approach.

The future, Varun believes, is to move away from having to assess risk from scratch for each project. Mindtree, which focuses on business verticals such as insurance (including regulatory risk and compliance), is exploring how it might offer customers prepackaged risk frameworks, tailored to specific industries or types of applications. An example: Mindtree might approach a bank and its software vendor and say “Look, here are the typical 100 requirements for this type of project, and here are the typical risks associated with each requirement.”

Keep reading up on risk and you’ll eventually stumble on a basic truism, one that applies far beyond the world of software. Human beings are generally lousy at assessing and pricing risks, especially those risks that are very unlikely though catastrophic if they do happen.

Here’s an example. Pick one game to play; you have five seconds to choose. Your first choice: Flip a coin and give me $100 if it comes up heads. Your second choice: Shuffle a deck of cards, flip the top one over, and give me $3,000 if it comes up queen of hearts.

Most people instinctively think something like “well, it’s 50-50 that I’ll be out $100 if I flip a coin and there’s almost no chance that I’ll pick the queen of hearts at random, so give me the deck of cards.” In fact, the card game is the more expensive option. Your expected cost in playing is nearly $58, or $3,000 divided by 52, the number of cards in the deck; the cost of the coin toss is just $50.
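
The arithmetic behind the two games can be checked with a short Python sketch. The function name `expected_cost` is a label chosen here for illustration; the probabilities and dollar amounts are the ones given in the text.

```python
from fractions import Fraction


def expected_cost(probability: Fraction, loss: int) -> Fraction:
    """Expected cost of a bet: chance of losing multiplied by the amount lost."""
    return probability * loss


coin_toss = expected_cost(Fraction(1, 2), 100)     # heads on a fair coin
card_draw = expected_cost(Fraction(1, 52), 3000)   # one specific card out of 52

print(f"coin toss: ${float(coin_toss):.2f}")  # coin toss: $50.00
print(f"card draw: ${float(card_draw):.2f}")  # card draw: $57.69
```

Using `Fraction` keeps the comparison exact, so the card game comes out costlier regardless of rounding.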

There are loads of examples of this in the wider tech world, where systems get much more complicated than a random deck of playing cards. And because we’re so bad at pricing risks, James Bach looks askance at most approaches to risk-based testing that come with an ostensible veneer of mathematical rigor.

A more moral testing regime

Any discussion of risk-based software testing eventually leads to Bach, whose 1999 article “Heuristic Risk-Based Testing” still appears near the top of any Google query on the topic. Bach, himself a high school dropout, has the kind of career that’s only possible in the world that software helped to create. He travels the world teaching and consulting on software testing, though when he’s home on Orcas Island, Wash., he’ll offer free coaching for anyone who contacts him via Skype. (His user name: satisfice.)

Bach doesn’t hold back when asked about the usefulness of doing basic calculation of risk when building a test plan: “A lot of people do risk-based testing by coming up with [fake] numbers and multiplying them together in [stupid] ways. That’s not mathematical. It is ritualistic, unscientific and unnecessary.”

He bases his complaint on the fact that assigning actual values of either likelihood or cost of a given bug is astonishingly difficult, which causes most teams to use arbitrary and highly subjective scales for each. One common example is using a 1-10 scale to rank both probability and impact of a list of possible bugs. Multiplying the two numbers together gives a prioritized list, but given the garbage-in/garbage-out nature of the data, the ordering is invariably inaccurate or even meaningless.
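
One way to see how fragile such a ranking can be is a small Python sketch in which two reviewers score the same bugs on a 1-10 scale and disagree by a single point here and there. The bug names and scores are entirely hypothetical, and this is only an illustration of the sensitivity problem, not a method Bach himself describes.

```python
def rank(scores: dict[str, tuple[int, int]]) -> list[str]:
    """Order bug names by probability * impact, highest product first."""
    return sorted(
        scores,
        key=lambda name: scores[name][0] * scores[name][1],
        reverse=True,
    )


# Hypothetical (probability, impact) scores on a 1-10 scale.
reviewer_a = {
    "login timeout": (6, 7),
    "report export crash": (5, 8),
    "stale cache": (4, 9),
}
# Same bugs and impacts; probabilities differ from reviewer A by at most one point.
reviewer_b = {
    "login timeout": (5, 7),
    "report export crash": (5, 8),
    "stale cache": (5, 9),
}

print(rank(reviewer_a))
print(rank(reviewer_b))
```

In this contrived case, the one-point disagreements are enough to reverse the priority order completely.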

Instead of faux calculations, Bach urged his clients to interrogate their code by just thinking through all the ways things might go wrong, more like a prosecutor than a programmer. There are many methodologies to pick from, but basically it comes down to systematically working through a series of open-ended questions, much as you would with your teenage daughter who wants to go to a dance with a boy you’ve never met. And whether it’s the prom or release date that’s looming, clear-eyed honesty may be the most important criterion of any test methodology.

The subject of honesty brings us full circle to HealthCare.gov, a topic about which Bach, unsurprisingly, has strong opinions. He expressed many of these in a Nov. 13 blog post, “Healthcare.gov and the tyranny of the innocents,” that lambasts everyone in the management chain who was both clueless about code and unwilling to listen to complaints from their more technical subordinates.

However, Bach doesn’t spare these front-line technologists either, especially as it’s come out that the testing that was done before the site’s launch confirmed that it was nowhere near ready: “Why didn’t you go public? Why didn’t you resign? You like money that much? Your integrity matters that little to you?” he wrote.

Is risk-based testing really as much about morals as methodology? Maybe, though even Bach, who seems to have built his reputation on radical candor, admitted that it’s sometimes hard to do the right thing. And these lapses come with a cost of their own, one that may be hardest of all to put a price tag on.

“I don’t always live up to my highest ideals for my own behavior,” he said, “and when that happens, I feel shame.”

G. Arnold Koch is a writer in Portland, Ore. His last article, “The release management tug of war,” appeared on SDTimes.com in August 2013.