The world of software testing has always been a mishmash of ideologies and methodologies. A diverse worldwide testing community has adjusted and innovated techniques independent of any unified set of standards, sharing philosophies and ideas but not common practices.

ISO 29119 aims to change all that, and in the process has set the testing world on fire.

The software testing community is in the midst of a deep and contentious rift, with ISO 29119 at its heart. The International Organization for Standardization (ISO), in conjunction with the International Electrotechnical Commission (IEC) and the Institute of Electrical and Electronics Engineers (IEEE), has put forth ISO/IEC/IEEE 29119 (ISO 29119 for short), a set of standards governing testing processes, documentation, techniques and keyword-driven testing, designed to create uniform testing practices within any SDLC or organization. Parts one, two and three of the standard were published in September 2013, with parts four and five on track for publication in late 2014 or 2015.

While testers have spoken out against the standard’s creation for years, recent times have seen the debate and controversy escalate to a breaking point with the growing momentum of the Stop 29119 petition. The petition, which eclipsed its goal of 1,000 signatures in less than a month, aims to block the ISO or anyone else from making a legally defensible claim that their “standard” represents a consensus of experts in testing, and ultimately to strike the standard down.

The petition has brought this debate to the fore in blog posts, forums, social media and testing communities, all arguing about the standard itself, the testing philosophies perceived to be represented (or not represented) within, and whether testing needs or would even be affected by a standard in the first place.

Behind the petition is an organization called the International Society for Software Testing (ISST), formed right before the first parts of ISO 29119 were published. The ISST was founded to promote the philosophy of context-driven testing and the value of tester skills, and to oppose the over-automation of testing or practices that, according to the ISST website, “are wasteful or seek to dehumanize testing.”

Its mission hits on one of the core drivers behind the movement: testers who’ve always tested as they saw fit using a variety of techniques and methodologies will naturally lash out against a group and viewpoint threatening to fundamentally change how they work.

“The petition to stop [ISO 29119] is just the idea that if this becomes broadly accepted, there are already other organizations like ISST that have published similar standards and terms,” said Gartner analyst Thomas Murphy, an IT expert with decades of experience covering software development and delivery. “I don’t know that the ISO standard would all of a sudden make these invalid, but I think of it as protectionist and [against] the idea of a special group setting standards.”

Anatomy of a testing standard

ISO 29119 didn’t happen overnight. In May 2007, the ISO formed WG26, a working group to develop new standards on software testing. In the past seven years, WG26 has held twice-annual meetings, presented at testing conferences and workshops, and gone through multiple drafts of each part of the standard as the process has run its course.

According to Stuart Reid, convener of WG26 and CTO of the U.K.-based Testing Solutions Group, ISO 29119 builds upon past ISO and IEEE standards that touched on but didn’t directly concern testing. The goal was ideally not to reinvent the wheel, but to build upon and stitch together established practices.

“Up until last year, there was no definitive international set of software testing standards,” Reid said. “There are standards that touch upon software testing, but many of these overlap and contain what appear to be contradictory requirements with conflicts in definitions, processes and procedures. There were large gaps in the standardization of software testing, such as organizational-level testing, test management and non-functional testing, where no useful standards existed at all.

“This means that consumers of software testing services and testers themselves had no single source of information on good testing practice. Given these conflicts and gaps, developing an integrated set of international software testing standards that provide far wider coverage of the testing discipline provided a pragmatic solution to help organizations and testers.”

In recounting the road to a complete standard, Reid explained that because of the evolving nature of the testing industry, many parts of the standard such as Test Processes were devised from scratch to ensure that factors like agile life cycles and exploratory testing were considered. The Keyword-Driven Testing standard only came into existence in 2012, and a working draft of an as-yet unapproved standard on Work Product Reviews is still without a timeline for publishing.

The working group has a long list of co-editors and members, consisting of academics and testers from universities, startups, companies large and small, and even governments. Two dozen countries are represented as well.

“The question is who recognizes this standard and who created the standard,” said Gartner’s Murphy. “Often many of these standards are also looked at as ivory tower and process-centered, not reality-based or agile-driven.”

In reference to the WG26 co-editors, Murphy said, “You do get a couple consultants and academics. Also since Mindtree [general manager Sylvia Veeraraghavan] was an author, it will be interesting to see if acceptance comes from the larger testing service providers.”

Still, Reid stressed the working group’s focus on the openness and comprehensiveness of the standardization process. He detailed a “mirror panel”—similar to the concept of an electoral college—where WG26 members represent and consult with their home countries, testers from a specific industry, specific roles such as testing consultants, or testing interest groups to review and comment on drafts of each standard, all in the name of “consensus.”

“Members of [WG26] are well-versed in the definition of consensus,” Reid said. “The six years spent in gaining consensus on the published testing standards provided us all with plenty of experience in the discussion, negotiation and resolution of technical disagreements.

“However, we can only gain consensus when those with substantial objections raise them via the ISO processes. The petition talks of sustained opposition. A petition initiated a year after the publication of the first three standards, after over six years’ development, represents input to the standards after the fact and inputs can now only be included in future maintenance versions of the standards as they evolve. It is unclear if ‘significant disagreement’ reflects the number of professional testers who don’t like these standards or the level of dissatisfaction with their content.”

The term “consensus” can be a hazy one, though. Rex Black, a software tester and president of test consulting, outsourcing and training firm RBCS, believed that Reid genuinely wants to make a difference in testing, but that the representativeness of the standard is ultimately beholden to a small committee.

“It represents a consensus of the people who participated in the standards committee, just like any standard,” Black said. “That’s always the case, and it’s one of the limitations of any standard or pseudo-standard. You just have to hope those people are reputable and did their homework to make sure they understood what other perspectives are out there.”

The schism

Testing is a fragmented community to begin with. Various schools of thought compete with each other in the marketplace of ideas, with no consensus in the field even among “recognized experts” about the definition of testing. In that light, trying to apply a standard to the craft only serves to split the testing world down the middle.

One of the loudest voices heaping criticism on ISO 29119 is that of software tester, author and speaker James Bach, founder and CEO of test consulting company Satisfice and a charter member of the ISST. He is also a proponent of the context-driven testing philosophy on which the ISST is founded.

Bach’s viral blog post entitled “How Not to Standardize Testing” has made him one of the outspoken faces of the movement, and he doesn’t mince words when describing the standard and the so-called faction behind it. “The imposition of a ‘standard’ by one faction of the testing community, apart from being illegitimate, has the potential to allow them to control who gets to call themselves a tester,” he said.

“It’s important to note that this ‘standardizer’ faction has not been able to win on the merit of their work. They could make their ideas public and promote them at conferences and in training just like the rest of us. I have no problem with such de facto standardization. If these ideas gained acceptance naturally, that would be perfectly fine. Now they want to change the rules of the game by declaring their ideas the official way to do testing.”

Bach flatly rejects Reid’s claims of openness and consensus in the ISO 29119 standardization process. According to Bach, WG26 deliberately excluded the context-driven school of testers, not by blatantly barring them, but through what he called a “structurally exclusive” process designed to ignore dissenting opinion while a small group of individuals makes decisions.

The direct confrontation between Bach and Reid dates back to 2006, when the two debated during Bach’s keynote speech opposing certification programs at the inaugural CAST conference.

“Stuart [Reid] was in the audience and he objected, so we decided to reconfigure my talk into a debate format,” Bach said. “I was struck by Stuart’s reluctance to answer the actual points I made. I would say some things, then he would speak as if I hadn’t said anything, then I would answer his points, then he would again speak as if I hadn’t said anything.

“We continued the debate privately at a pub later on. He claimed that there were 35,000 ISEB [Information Systems Examination Board] certified testers, and that constituted evidence that it was a good program… It took me 30 minutes to get him to admit that his ‘35,000 happy customers’ argument had not a shred of scientific basis. It’s the sort of argument a politician makes.”

The criticisms from Bach, the Stop 29119 petition and the ISST have not gone unanswered. In response to the petition, Reid picked out several quotes from its mission and comments to dispute head-on.

“There is a misconception that standards tie users to rigidly following processes and creating endless documentation,” Reid said. “We have tried not to restrict testers in how they perform their testing. The standards require compliant testers to use risk-based testing, but do not restrict testers in how they perform this activity.”

Reid also directly addressed context-driven testing and its place in the standard, specifically mentioning ISO 29119 co-editor Jon Hagar as a context-driven tester who ensured the working group considered context-driven perspectives.

“I fully agree with the seven basic principles of the ‘Context-Driven School,’ ” Reid said. “To me, most of them are truisms, and I can see that to those new to software testing they are a useful starting point. I also have no problem with context-driven testers declaring themselves as a ‘school’; however, I am unhappy when they assign other testers to other deprecated ‘schools’ of their own making.”

The masses chime in

The Stop 29119 petition embodies prevailing dissent toward the standard, but by no means does it end there. Aside from comments in the petition discussion, recent debates have emerged in places like the Software Testing & Quality Assurance LinkedIn group, on Twitter under the #Stop29119 hashtag, and on the uTest forums of Applause, the largest online crowdsourcing community for software testers. Most testers seem to fall on the dissenting side.

“[ISO 29119] embodies a dated, flawed and discredited approach to testing,” testing consultant James Christie wrote in a uTest blog post. “It requires a commitment to heavy, advanced documentation. Such an approach blithely ignores developments in both testing and management thinking over the last couple of decades. ISO 29119 attempts to update a mid-20th century worldview by smothering it in a veneer of 21st-century terminology. It pays lip service to iteration, context and agile, but the beast beneath is unchanged… This is not a problem that testers can simply ignore in the hope that it will go away… We must ensure that the rest of the world understands that ISO is not speaking for the whole testing profession, and that ISO 29119 does not enjoy the support of the profession.”

Yet when approximating the opinion of the majority, the outspoken opinions of a few must be taken with a grain of salt. Set against the 1,047 or so signatories of the petition are more than 336,000 ISTQB-certified testers who haven’t signed. RBCS’ Black believes this is a silent majority.

“These guys making all this noise are in no way stakeholders,” he said. “They’re part of the self-identified disciples of this context-driven school of testing. One of their central principles is there are no best practices. It’s almost like they’re born to hate [ISO] 29119 no matter what it says.

“Compare that against over 300,000 ISTQB-certified testers around the world. It’s a drop in the bucket. These context-driven guys, maybe they account for 1% of the community at large. The true believers in standards that’ll go out and follow 29119 to the letter, that’s another 1%. Then there’s the 98% of the rest of us, and almost everybody looked at this whole thing as silly.”

An uphill battle for a standard

A software-testing standard to get the entire sprawling community on the same page does have inherent value. Yet with the craft as contentious and divided as it’s ever been, with Continuous Deployment pressuring testers to automate and the ripple effects of the published standard still unfolding, a true consensus looks like a pipe dream.

“Most people don’t take notice of these standards,” said Gartner’s Murphy. “I have only talked with a few companies in 13 years of calls that have asked about adherence to any previous [quality and testing] standards. Bottom line: I am less worried about the standard for how you test; I am more interested in results. Things like defect containment and a focus on continuous quality, not on testing as a discrete process. Thus you are going to see a lot of pushback from the world of continuous and organizations that don’t think of testing and/or quality in isolation.”

Even Reid knows the testing community won’t change quickly, and admits the standard may not gain adoption at all.

“There must be millions of testers across the globe, and in my lifetime I see little chance of the majority of this huge group ‘embracing’ and complying with the standards,” he said. “My vision would be for more testers to use the standards to either improve their testing or give themselves confidence that their testing is already good. I believe these standards will provide a benchmark that supports future innovation and advancement of testing practices.”

Bach believes many testers working for large companies don’t feel free to express their opinions, and that Reid and the standard represent the interests of large companies, which may have little incentive to test more efficiently because doing so could cut into billable hours.

“Stuart once told me, to my face, that he didn’t believe that I really thought differently about testing than he did, but rather that I affected that position in order to market myself as a maverick,” Bach said. “[WG26] needs to honor the differences among us and see if there is even the possibility of bridging what divides us.”

Bach’s view of the testing world is that of “a period of warring paradigms,” where testers can’t even agree on what the word “testing” means or what its scope is. As for the standard, he predicts it would have a chilling effect on young testers and the future of the craft.

“Testing needs to get better,” Bach said. “The standardizers wish to sell our field into bondage to 40-year-old ideas at a time when we need to do our best work. This standard is a formula for determined ignorance.”

RBCS’ Black agreed, adding that while past standards have been a source for useful ideas, very few organizations have made a strict effort to comply.

“I sit with somewhat [amused] watching this enormous conflagration because there’s been an IEEE 829 test standard for documentation since the early 1990s,” he said. “There also was this IEEE 1044 standard for bug management out in the late 1980s and early 1990s. These things have been around forever and have had very limited impact and the 29119 standard was always doomed for the same impact, especially since most testers won’t even see it because it’s behind a paywall.”

Ultimately, Black sees the ISST and context-driven testing camp as a noisy minority who’ve managed to make a big splash on social media. He believes the context-driven school uses categorization to create a dualistic view of the testing world: it’s either this or that. The standard, he thinks, is simply their latest tool with which to polarize.

“This whole situation reminds me of a joke,” Black explained. “This guy goes into a field and he sees someone in the middle of the field waving this enormous pink rag in the air. He goes over to the guy and asks, ‘What are you doing?’

“The guy says, ‘I’m chasing away elephants.’

“He responds, ‘There aren’t any elephants around here.’

“The man then exclaims, ‘See, it works!’ ”

Inside ISO 29119

According to Reid, ISO 29119 aims for three key benefits: improving communication through a common terminology, defining good practices with guidelines and benchmarks for testers and customers, and providing a baseline for comparing different test design techniques and the consistency of test coverage. He made a point of saying the working group doesn’t claim the standards as “best practices.”

The breakdown of each part of the standard, according to SoftwareTestingStandard.org, is as follows:

• Concepts and Terminology: Defines the role of testing, testing processes in the SDLC, risk-based testing, sub-processes, various practices, and automation. Also covers testing in relation to agile, sequential and evolutionary development.

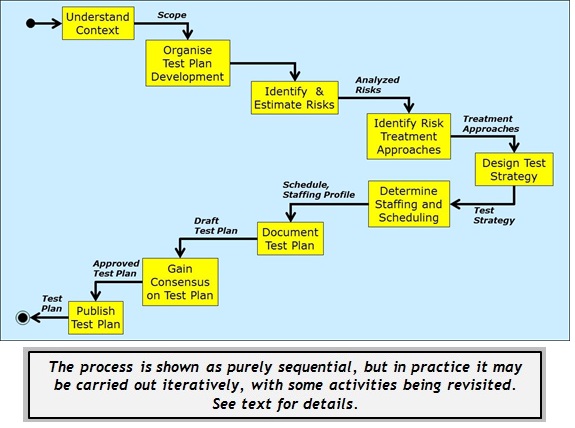

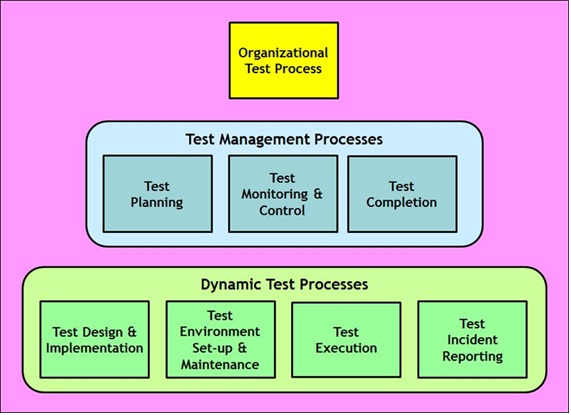

• Test Processes: Models specific processes and workflows to manage and implement testing.

• Test Documentation: Templates for test documentation complete with specifications and requirements for organizational test, test management and dynamic test processes.

• Test Techniques: Defines specific techniques on which to base test cases for specification-based, structure-based and experience-based testing. Due for publication later this year.

• Keyword-Driven Testing: A way of describing test cases by using a predefined set of keywords to perform specific steps in test cases. Covers application, roles and tasks, frameworks, and data interchange for KDT. Due for publication in 2015.

• Test Assessment: Defines a Process Assessment Model to produce consistent and repeatable ratings for measuring the capability and effectiveness of a given testing process. Due for publication either this year or next.
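As a rough illustration of the keyword-driven idea described in the list above, the following is a minimal Python sketch. It is not taken from the standard: the keyword names, the `FakeLoginPage` stand-in and the `run_test_case` helper are all hypothetical, chosen only to show how a test case can be written as rows of keywords that a small interpreter maps to implementation code.

```python
# Hypothetical keyword-driven testing sketch; not drawn from ISO/IEC/IEEE 29119.


class FakeLoginPage:
    """Stand-in for the system under test (an assumption for illustration)."""

    def __init__(self):
        self.fields = {}
        self.message = ""

    def enter(self, field, value):
        self.fields[field] = value

    def submit(self):
        ok = (self.fields.get("username") == "alice"
              and self.fields.get("password") == "s3cret")
        self.message = "Welcome, alice" if ok else "Login failed"


def run_test_case(steps, page):
    """Execute (keyword, *args) steps and return a list of failure messages."""
    failures = []

    def check_message(expected):
        if page.message != expected:
            failures.append(f"expected {expected!r}, got {page.message!r}")

    # The keyword library: each keyword name maps to one implementation.
    keywords = {
        "Enter Text": page.enter,
        "Click Submit": page.submit,
        "Verify Message": check_message,
    }

    for keyword, *args in steps:
        if keyword not in keywords:
            failures.append(f"unknown keyword: {keyword!r}")
            continue
        keywords[keyword](*args)
    return failures


# A test case is just data: each row is a keyword followed by its arguments.
valid_login = [
    ("Enter Text", "username", "alice"),
    ("Enter Text", "password", "s3cret"),
    ("Click Submit",),
    ("Verify Message", "Welcome, alice"),
]

if __name__ == "__main__":
    print(run_test_case(valid_login, FakeLoginPage()) or "PASS")
```

Because the test case is plain data, the same rows could in principle live in a spreadsheet or text file, with only the keyword library changing when the application under test changes.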

Context-driven testing

According to Bach, context-driven testing was born in the late 1990s during peer conferences in Silicon Valley. Testers from across the software development industry found that focusing on skills and problem-solving allowed them to connect people much better than touting certain practices as “best.”

“In CDT we study methodology,” Bach explained. “We don’t just promote somebody’s pet opinion. We model ourselves after scientific values and practices, as well as philosophical practices, hence calling ourselves a ‘school’ of thought. We believe in applying effective solutions to real problems, and that means developing our skills to do that. We are therefore craftsmen, rather than pitchmen.”

The seven key principles of context-driven testing, as laid out by Bach and professor/tester Cem Kaner, are as follows:

1. The value of any practice depends on its context.

2. There are good practices in context, but there are no best practices.

3. People, working together, are the most important part of any project’s context.

4. Projects unfold over time in ways that are often not predictable.

5. The product is a solution. If the problem isn’t solved, the product doesn’t work.

6. Good software testing is a challenging intellectual process.

7. Only through judgment and skill, exercised cooperatively throughout the entire project, are we able to do the right things at the right times to effectively test our products.

CDT’s biggest competitor is the “Factory” school, a rival methodology Bach likened to those behind the standard. “[The Factory school] does not like to call itself a school because to do that would be to admit it wasn’t representing everyone. The Factory school wants to minimize squishy human involvement in testing. They revere procedures,” he said. In equating the Factory school to the testers behind ISO 29119, Bach is quite blunt.

“CDT is better than the Factory approach because there is no evidence that testing can be turned into a rote procedure,” he said. “The very nature of testing is creative and critical thinking. It is a form of design work. It is inherently exploratory. Anyone who thinks they can make this into a factory-like process must offer some theory that justifies this, and some example of how that theory has ever led to success in practice.

“No one does this. Factory school testing is very popular, not because it works, but because it seems easy to manage… We in CDT pride ourselves on being able to explain what we do and why we do it. There is nothing mystical—it’s just a matter of skill. That skill can be systematically taught and learned.”

What about automation?

One glaring aspect of modern software testing only tangentially acknowledged by either side of the schism is automation. The standard defines a few concepts of automation and covers one narrow aspect with regard to keyword-driven testing, while the context-driven testers behind the petition are “concerned about the over-automation of testing,” according to Bach.

“We avoid the phrase ‘test automation’ because testing cannot be automated,” Bach said. “We call that ‘tool-supported testing’ or ‘automation in testing.’ We make extensive use of tools… We think of automation the way skilled carpenters think of automation: we love our power tools, and we can also operate without them. What we are against is the de-skilling of the craft through the process of attempting to automate things that actually can’t be automated.”

In the current testing climate, where the advent of agile and the added regression risk of constantly changing codebases have increased the need for automation, leaving automated testing out of the argument severely damages that argument’s credibility, especially given the proliferation of countless high-quality open-source and commercial tools.

Keyword-driven testing is a test automation framework concerning automated system testing and GUI testing, but that alone does not make the standard a representative guide to automation.

Black believed KDT is an important component of the solution, but only a small part. “When you think of these Continuous Integration frameworks like Hudson and Jenkins, and all the tools you can plug in for static analysis, automated unit testing, test-driven development and behavior-driven development, automation is enormous,” he said.

“It’s not the one solution, but it’s certainly part of it. It’s not either this or that; it’s all of the above. We have to come at a testing problem a lot of different ways, and automation is an effective test strategy especially against regression risks.”